Optimal Transport Enhances Data Privacy in Diffusion-based Foundation Models

Drs. Mi Jung Park (Computer Science) and Young-Heon Kim (Math) have been awarded the DSI Postdoctoral Matching Fund for their project "Optimal Transport Enhances Data Privacy in Diffusion-based Foundation Models".

Summary

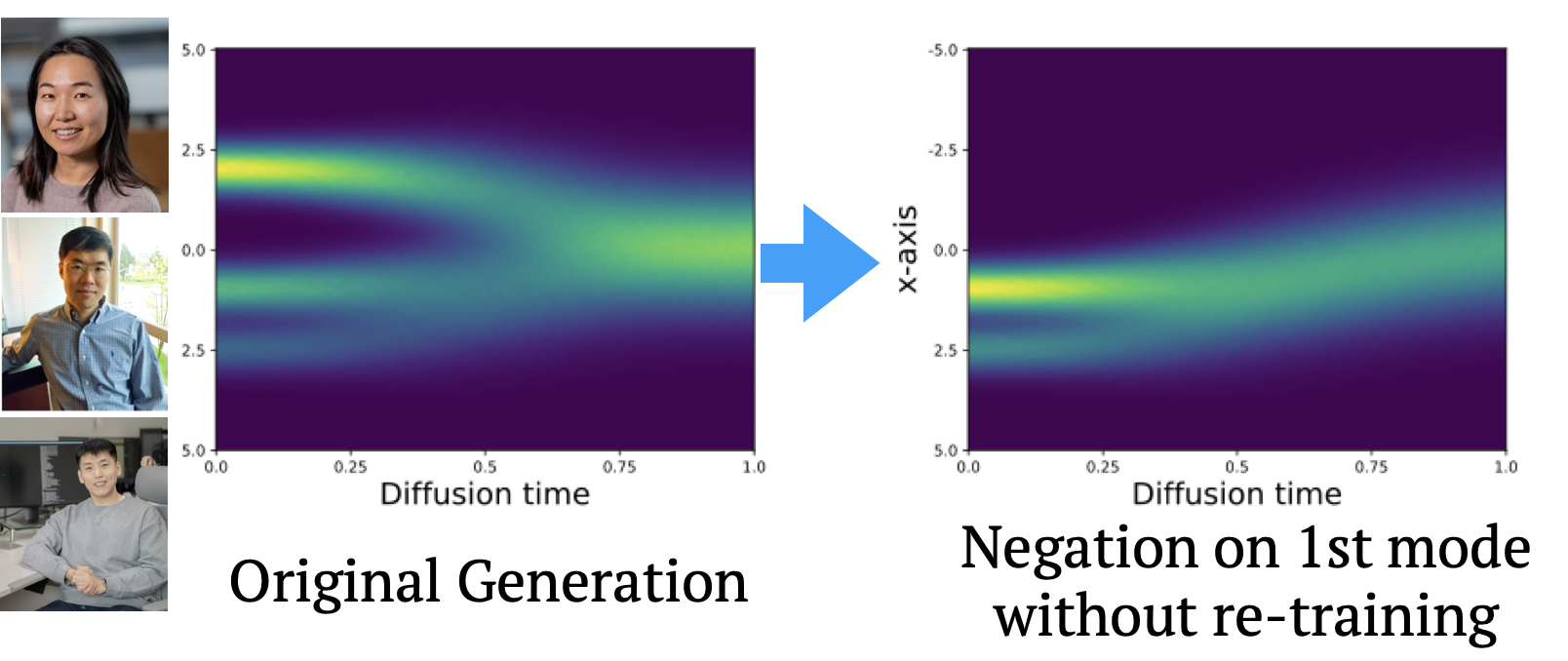

The research proposes a novel approach to “machine unlearning” in diffusion-based foundation models (e.g., text-to-image generators) using optimal transport (OT) theory, addressing the need for data privacy. It highlights that diffusion models often memorize training data, posing privacy risks, and aims to demonstrate how OT can rigorously remove or “forget” specific data points without costly retraining.

Background

Diffusion-based models, used in applications such as image and text generation, can inadvertently expose sensitive or copyrighted training data, underscoring the need for effective methods of “negating” the influence of specific data. Regulations such as the European Union’s General Data Protection Regulation (GDPR) enshrine a “right to be forgotten,” driving research on machine unlearning to build compliant, privacy-preserving AI systems.

Technical Challenge

Retraining large-scale models after removing data is computationally prohibitive, while approximate forgetting methods can degrade performance and lack theoretical guarantees. Incorporating OT into the unlearning process is promising but brings its own complexities, especially for high-dimensional diffusion models, and requires careful algorithmic design and theoretical analysis.

Research Goals

- Develop provable machine unlearning techniques by leveraging OT to measure distances between distributions, ensuring robust removal of private or sensitive data while preserving model capabilities.

- Explore methods such as stochastic sampling strategies and “machine-unlearned sampling” to efficiently erase the influence of targeted data distributions, enhance generalization, and maintain or improve performance on generative tasks.
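The summary does not specify which OT formulation the project will use. As a minimal sketch only, assuming entropy-regularized OT (the Sinkhorn algorithm, a standard technique swapped in here purely for illustration), the Python below computes a transport cost between two small sets of feature vectors. These could be, hypothetically, embeddings of data to be forgotten and embeddings of model samples. The names `sinkhorn_cost`, `squared_euclidean`, and `logsumexp`, the parameters `eps` (regularization strength) and `iters`, and the toy data are all illustrative assumptions, not the project's method.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def squared_euclidean(p: Point, q: Point) -> float:
    """Ground cost c(p, q) = ||p - q||^2 between two feature vectors."""
    return sum((pi - qi) ** 2 for pi, qi in zip(p, q))


def logsumexp(values: List[float]) -> float:
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def sinkhorn_cost(xs: List[Point], ys: List[Point],
                  eps: float = 0.1, iters: int = 500) -> float:
    """Hypothetical helper: transport cost <P, C> of the entropy-regularized
    OT plan between two uniform empirical distributions (log-domain Sinkhorn)."""
    if not xs or not ys:
        raise ValueError("both sample sets must be non-empty")
    n, m = len(xs), len(ys)
    log_a = math.log(1.0 / n)  # uniform mass on each source sample
    log_b = math.log(1.0 / m)  # uniform mass on each target sample
    cost = [[squared_euclidean(x, y) for y in ys] for x in xs]

    f = [0.0] * n  # dual potential attached to xs
    g = [0.0] * m  # dual potential attached to ys
    for _ in range(iters):
        # Update f so the plan's row sums match the source weights.
        f = [eps * log_a
             - eps * logsumexp([(g[j] - cost[i][j]) / eps for j in range(m)])
             for i in range(n)]
        # Update g so the plan's column sums match the target weights.
        g = [eps * log_b
             - eps * logsumexp([(f[i] - cost[i][j]) / eps for i in range(n)])
             for j in range(m)]

    # Plan entries P_ij = exp((f_i + g_j - C_ij) / eps); return sum of P_ij * C_ij.
    return sum(math.exp((f[i] + g[j] - cost[i][j]) / eps) * cost[i][j]
               for i in range(n) for j in range(m))


if __name__ == "__main__":
    # Toy 2-D "features" (hypothetical): the second set is the first shifted by 3.
    forgotten = [[0.0, 0.0], [1.0, 0.0]]
    generated = [[0.0, 3.0], [1.0, 3.0]]
    print(sinkhorn_cost(forgotten, generated, eps=0.01))  # very close to 9.0
```

Working in the log domain avoids the underflow that computing `exp(-C / eps)` directly would cause for small `eps`. Each iteration costs O(nm) in pure Python, so for realistically sized, high-dimensional diffusion outputs a vectorized library such as POT (Python Optimal Transport) applied to minibatches would be a more plausible choice.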